Abhinav Grover

Master's Student (2021)

Department: Alumni

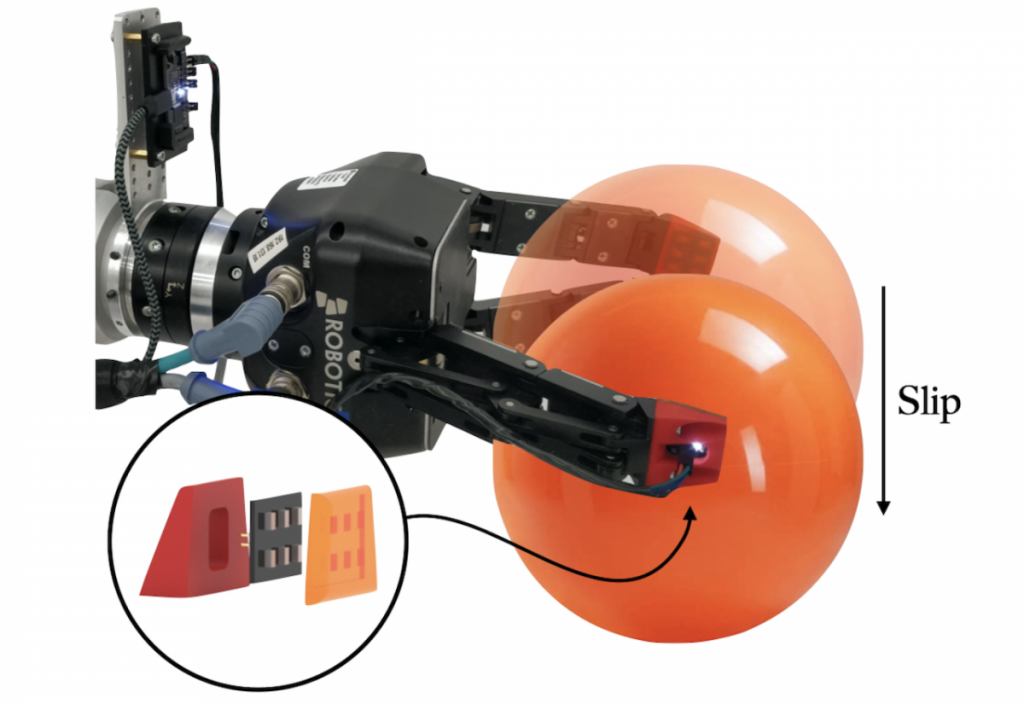

The ability to perceive object slip through tactile feedback allows humans to accomplish complex manipulation tasks. Tactile signals provide vital information about slip faster than any exteroceptive perception method such as vision. Slip can be both disastrous (e.g., when transporting a fragile object) and advantageous (e.g., when moving an object without lifting it) depending on the context. For robots, however, detecting slip from tactile data remains challenging. This is due, in part, to the limited range of tactile sensors available and to the nature of tactile signal transduction.

Abhinav explored a learning-based method to detect slip using barometric tactile sensors. These sensors have many desirable properties: they are durable, highly reliable, and built from inexpensive components. He collected a novel tactile dataset and trained a temporal convolutional neural network to detect slip events. When tested on two robot manipulation tasks involving a variety of common objects, the detector demonstrated generalization to previously unseen objects. This is the first time that barometric tactile sensing technology, combined with data-driven learning, has been applied to slip detection.